Assumed Audience: Programmers interested in GUIs.

Discuss on Hacker News and Reddit.

Epistemic Status: Fairly confident, but these ideas are not implemented.

Introduction

As I mentioned in my previous post, I am losing my motivation to continue programming as a hobby. I am also losing my free time; the economy is too poor to spend labor on things that cannot provide income.

And that is discouraging by itself; I have so many ideas, most of them focused on reversing terrible trends in the industry.

So in this last gasp post, the final post I will write on my ideas (I think), I will focus on fixing one of the worst trends: the bloating of GUI frameworks.

The most popular framework now is Electron, and it literally packages Chromium, the core of Chrome. We are packaging a full browser to run single GUI programs.

This is a terrible trend! Chrome is controlled by Google, a Big Tech company that does not have our interests at heart. It is also fat; a single Electron-based program can take multiple gigabytes of RAM.

Of course, there are reasons for this: Electron is cross-platform with some accessibility and animation features. The only other framework that comes even close is Qt, and it is not completely free.

In addition, all frameworks seem to have some of the same problems.

So here is a design to fix some of those problems; I hope others will be able to implement them.

Are you lazy and just want the actual new architecture? Go here.

Nonrequirements

First, we have to figure out what is not a requirement.

And in my eyes, there’s only one: native widgets.

Yes, native widgets would be nice, and people do expect them.

However, Qt and Electron are now big enough that people expect them less than they used to.

In addition, each widget becomes a portability problem by itself. Native widgets don’t act the same across platforms, and some platforms may not have some widgets that others do.

In other words, without native widgets, our cross-platform work would include graphics, sound, events, accessibility, and maybe a few other things. With native widgets, we would need to do all of that plus each supported widget.

It would be less work to create our own widgets because there are at least 3 platforms to support, and having widgets that work the same on every platform would create a consistent feel with programs using the framework.

They would create a consistent look too; the widgets would look the same across all platforms.

This would actually solve platform stupidity, where platform vendors destroy affordances for “design” reasons. The framework could ensure that checkboxes are square, with a checkmark, and radio buttons are circles, and that fact would hold even across platform updates.

Yes, native widgets are nice, but for the people that work on multiple platforms, that consistency across platforms will be nice. And as I said, single-platform users are used to programs straying from the native stuff anyway.

In addition, a lot of those widgets are not designed for non-English languages; building our own means that we could do that right.

Requirements

And now, for the list of requirements, in priority order:

- Cross-platform

- Easy

- Performant

- Accessible (as in, accessibility)

- Globalized

- With Standard UI Affordances

- With Standard UX Affordances

- Robust, Reliable, and Predictable

- Flexible

- Programmable

- Discoverable

- Testable

Let’s talk about each of them.

Cross-Platform

Any new framework must take the existing platforms into account. Within a rounding error, no one will build on frameworks that are not cross-platform because there are enough potential customers on Windows and macOS, not to mention that most customers are actually on mobile!

Yes, as unfortunate as it is, the new reality is that mobile devices are the primary computers for most people. I say this as someone whose primary device is a custom-built tower with a bespoke Gentoo Linux setup! Even I acknowledge the hegemony of mobile!

I know at least one person who uses Termux to recreate a regular Unix-like experience on their phone.

So perhaps a new framework could get away with just focusing on mobile, and I think that would be sufficient.

But as a desktop user, I’d also like it to support it, so our new framework must work on:

- Windows

- macOS

- Linux X11

- Linux Wayland

- iOS

- Android

- BSDs (as a stretch goal)

But this will make things difficult; handling desktop and mobile means that the framework should adapt to each of those.

For example, the layout engine must have:

- A desktop mode

- A portrait mobile mode

- A landscape mobile mode

It might be necessary to split desktop into landscape and portrait, but it might also work to use mobile landscape mode for desktop portrait.

And when the client delivers the graphical output, which I will call a widget tree, the framework should render that according to the mode.
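To make the mode idea concrete, here is a minimal sketch of how the framework might pick a mode. The names `LayoutMode` and `pick_mode`, and the choice to decide from a form-factor flag plus the surface size, are my assumptions for illustration; nothing here is implemented.

```python
from enum import Enum


class LayoutMode(Enum):
    DESKTOP = "desktop"
    MOBILE_PORTRAIT = "mobile-portrait"
    MOBILE_LANDSCAPE = "mobile-landscape"


def pick_mode(is_mobile: bool, width: int, height: int) -> LayoutMode:
    """Choose a layout mode from the form factor and the surface size."""
    portrait = height > width
    if is_mobile:
        return LayoutMode.MOBILE_PORTRAIT if portrait else LayoutMode.MOBILE_LANDSCAPE
    # Assumption taken from the text above: a portrait desktop window
    # reuses the mobile landscape layout instead of getting a fourth mode.
    return LayoutMode.MOBILE_LANDSCAPE if portrait else LayoutMode.DESKTOP


print(pick_mode(False, 1920, 1080))
print(pick_mode(True, 400, 800))
```

If desktop portrait turns out to need its own mode, that would be one more enum member and one changed line.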

Easy

It should go without saying, but the framework should make it immensely easy to implement GUI software.

After all, the framework would be up against Qt, which is kind of difficult, and Electron, which is just HTML/CSS, and HTML/CSS is kind of easy!

If using the framework is not at least as easy as HTML/CSS, then it will fail. Yep, that simple.

It should be so easy that you could autogenerate a “Hello, World!” in the framework and build software piecemeal from there.

If programmers can’t start immediately with working code, they may get discouraged, so the framework should give the programmers what they want and show them a window right away.

Performant

For a GUI framework, performance can be split into three parts:

- Throughput

- Latency

- Low Power Use

Throughput

Throughput means that the framework should handle a lot.

“A lot of what?” you may ask. A lot of frames and events.

There may be more, but that’s all I can think of right now.

My target for frame throughput would be 120+ frames per second (FPS), even under load. If that’s not possible, then 60 FPS would be the minimum.

To push that many frames, the framework should use GPU rendering.

Of course, actual framerate does depend on the GPU, but the framework should be capable of delivering frame data 120+ times a second.

My target for event throughput would be at least one event per frame, maybe more.

Regardless, the framework must have high throughput.

Latency

Obviously, the framework should have low latency, and that means a few different things.

First, the UI thread should never be blocked for even a full frame.

Second, when the user causes an event, that event must be reflected in the graphical output within no more than four frames. One frame is the stretch goal, but that does depend on client code.

For example, say that the framework receives an event from the OS just before a new frame is displayed. That first frame is the first of the four.

So in essence, the four frame limit is actually a three frame limit because of timing.

However, the event could come right after a frame, so that first frame does still need to count in the delay. It just can’t count in the amount of allowable time for processing.

I think the framework must deliver the event to the client within about a tenth of a frame. This gives the client 90% of a frame to work on it and deliver the new widget tree to the framework. This is the second frame.

From there, the framework must do all of its processing of the new widget tree in one frame and deliver the shaders and geometry to the GPU to render. This is the third frame.

At that point, the GPU has one frame to render the shaders and geometry to the screen. This is the fourth frame.

At 120 FPS, a latency of three frames (remember, the fourth is a timing buffer) is only 25 ms. At 60 FPS, it’s 50 ms.

Is that fast enough? Well, according to Dan Luu, hitting 50 ms would put the framework in the top 3 for desktops and top 2 for mobile devices. And if we hit 120 FPS and 25 ms, the framework would be the fastest in both categories.

But I think the framework should do its processing of the widget tree in 40% of a frame. This means that if the client can do its event handling in half of a frame, the framework would have an input lag of only two frames, or about 17 ms at 120 FPS and 33 ms at 60 FPS.
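If you want to check those numbers yourself, the arithmetic is just frames divided by frame rate. The helper name `latency_ms` is mine, made up for this sketch.

```python
def latency_ms(frames: int, fps: float) -> float:
    """Time taken by `frames` whole frames at `fps` frames per second."""
    return frames * 1000.0 / fps


for fps in (60, 120):
    for frames in (3, 2):
        print(f"{frames} frames at {fps} FPS: {latency_ms(frames, fps):.1f} ms")
```

That prints 50.0 ms and 33.3 ms at 60 FPS, and 25.0 ms and 16.7 ms at 120 FPS, which matches the figures above.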

“But Gavin, 120 FPS is too hard!”

No, it’s not. Start watching this video (in fact, watch the whole playlist), and look at the top left.

In that corner is the top of a terminal the presenter wrote. One of the things in the title bar is “RenderFPS,” the number of frames the terminal is rendering per second.

Watch it for a few seconds, and you’ll notice that the average is 7400 FPS! Running at 120 FPS is only 1.6% of that!

People forget how fast computers are nowadays; 120 FPS should be fairly simple. In fact, the presenter’s thesis is this:

People think that, somehow, speed only comes from, like, redoing everything. And it’s true that the very fastest thing, you probably have to do that.

But just using some, you know, just some sensible code and caching, you can turn a slow terminal renderer into a fast terminal renderer.

In other words, it’s easy to make something fast enough if you are just sensible about what you do.

The rest of the playlist describes what sensible means, so go watch it.

But for our purposes, all you need to know is that 120 FPS is possible. In fact, since we are starting from scratch, we are redoing everything, so we can get “the very fastest thing.”

“Gavin, why are you hammering on this? Humans only notice 100 ms of latency!”

Actually, that seems to be wrong, empirically and anecdotally:

I’m surprised the “humans don’t notice 100 ms” argument is even made. That’s trivially debunkable with a simple blind A/B test at the command line using sleep 0.1 with and without sleep aliased to true. To my eyes, the delay is obvious at 100 ms, noticeable at 50 ms, barely perceptible at 20 ms, and unnoticeable at 10 ms.

Not to mention that 100 ms is musically a 16th note at 150 bpm. Being off by a 16th note even at that speed is – especially for percussive instruments – obvious.

On the other hand, if you told me to strike a key less than 100 ms after some visual stimuli, I’m sure I couldn’t do it – that’s what “reaction time” is.

And another user agrees:

That’s how it goes with my ears when recording as well. Most “Live feedback” mechanisms for guitar programs (heck even computer-made guitar hardware) have about 50ms of latency, which is quite disconcerting when you’re doing something heavily timing-based. 10ms is almost imperceptible to me (sounds more like the tiniest slightest reverb delay) and 5ms might as well be realtime as far as I’m concerned.

And this matters! The lower the latency, the more accurate user actions are.

Quite frankly, I want to get the delay to 5 ms for a truly realtime feel, which if we go by a max four frame delay means that we would need to run at 800 FPS.

Of course, as another user says, the 10 ms noticeable delay is probably because it’s sound, so what if we go by the 10 ms unnoticeable delay from the first user?

That is 400 FPS with a max four frame delay. That’s the target I would actually want to hit, so personally, I would make the framework respond fast enough to hit 400 FPS.

From there, it would only depend on the client. If the client is fast enough, maybe an event could come in, be handled, be processed, and rendered within one frame; at 120 FPS, that’s just over 8 ms, below the magical 10 ms threshold.

So when I say that the framework must deliver an event in 10% of a frame and do its processing in 40% of a frame, I mean a frame at 400 FPS, or 250 microseconds and 1 ms, respectively.

That leaves 7 ms for client event handling and frame rendering when running at 120 FPS.
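Here is the same budget worked out in code, as a sanity check. The variable names are mine; the percentages and frame rates are the ones from the text.

```python
def frame_us(fps: float) -> float:
    """Length of one frame in microseconds."""
    return 1_000_000.0 / fps


BUDGET_FPS = 400  # the framework's own budget is measured against this rate
deliver_us = 0.10 * frame_us(BUDGET_FPS)  # event delivery: 10% of a frame
process_us = 0.40 * frame_us(BUDGET_FPS)  # widget tree processing: 40% of a frame

# What is left of a single 120 FPS frame for the client and the GPU.
remaining_us = frame_us(120) - deliver_us - process_us

print(f"deliver: {deliver_us:.0f} us, process: {process_us:.0f} us, "
      f"left at 120 FPS: {remaining_us / 1000:.2f} ms")
```

The remainder comes out to about 7.08 ms, so "7 ms" is a slight round-down.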

It’s possible, but clients would need to work for it. But the important thing is that the framework gives them that option; if it took several frames to do its thing, clients would be stuck with poor latency. That would be unacceptable.

But what if users want to create a painting program in the framework? How low should the latency be?

The answer: a mere 1 ms. Watch that video closely, and see how wildly different it feels to go from 10 ms to 1 ms. If it were possible, I would want the framework to deliver events in 100 nanoseconds and do all of its processing in maybe 10 microseconds, leaving roughly 990 microseconds for client event handling and rendering.

Barring keyboard latency, of course. Nothing we can do about that.

One millisecond latency is a dream, but I would love to see it. And I think it’s possible, even for clients, albeit only in the case where the event doesn’t redraw the entire screen.

So the framework must have low latency. In fact, I would put low latency as a higher priority than high throughput.

Low Power Use

While the framework should be fast enough for low latency and high throughput, at high and continuous load, that doesn’t mean it should be drawing new frames every chance it gets.

It should only redraw when it has to, and only the section(s) of the screen that needs redrawing. This will reduce the amount of heavy CPU and GPU power use, maximizing battery life on mobile devices and laptops.

This includes skipping unneeded updates during animations so that power isn’t continuously burned. Blinking cursors should not take 13% CPU.

This means that the framework should be event-driven, not loop-driven, though events could include timers for things like animations or blinking cursors.
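A minimal sketch of what "event-driven with timers" could mean is below. Everything in it is an assumption for illustration: the `Loop` class is invented, and it runs on a virtual clock so the result is deterministic, where a real loop would block in the OS until the next event or timer.

```python
import heapq
from dataclasses import dataclass, field


@dataclass
class Loop:
    """Toy event loop on a virtual clock: it redraws only when a timer fires."""
    now: float = 0.0
    timers: list = field(default_factory=list)   # heap of (due_time, name, period)
    redraws: list = field(default_factory=list)  # log of (time, widget name)

    def add_timer(self, name: str, period: float) -> None:
        heapq.heappush(self.timers, (self.now + period, name, period))

    def run_until(self, end: float) -> None:
        # Instead of spinning once per frame, jump straight to the next
        # due timer and do nothing at all in between.
        while self.timers and self.timers[0][0] <= end:
            due, name, period = heapq.heappop(self.timers)
            self.now = due
            self.redraws.append((due, name))  # redraw just that widget
            heapq.heappush(self.timers, (due + period, name, period))
        self.now = end


loop = Loop()
loop.add_timer("cursor-blink", 0.5)  # a blinking cursor: 2 redraws per second
loop.run_until(2.0)
print(len(loop.redraws), "redraws in 2 seconds")
```

In this sketch the cursor costs 4 small redraws over two seconds, where a loop drawing every frame at 120 FPS would have drawn 240 full frames.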

Accessible

Like sidewalk ramps, which are for wheelchairs but are useful for strollers and bikes, making things more accessible makes those things more useful for everybody.

Plus, those who need it wouldn’t be able to use computers without it:

This isn’t just some, like, weird software that’s funny to hear and interesting; it’s literally what gives me access to my career and to my computer.

Think about what it would be like if tomorrow, you lost access to your computer. How much of your life is in that device, right?

– Emily Shea, “Voice Driven Development: Who Needs a Keyboard Anyway?”

So the framework should be built from the beginning for accessibility.

From first release, the framework should work with the accessibility APIs exposed by operating systems. This would mean that screen readers should work.

The framework should also make things fully navigable from the keyboard. Of course, clients will have to do some work here, but the framework should make that work easy; if clients have to fight the framework for accessible navigation, it has failed.

And in the theme of accessibility making things easier, keyboard-first navigation also helps expert users who need speed.

The framework should also be themable, and by both clients and users.

For example, since I am partially color blind, I prefer heavily saturated color schemes, which is why this website is the way it is.

If users could theme the framework, that would take away a lot of the accessibility issues regarding color and visuals.

To do that, clients would need to define what colors they need, and the framework should let the user set those colors.

Obviously, the framework should include a base set, including such things as the colors (or gradients) of unpressed buttons, the colors (or gradients) of pressed buttons, the color of the check in checkboxes, the background color, etc.
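One way this could work is a simple layered lookup, sketched below. The role names, the `resolve_theme` function, and the rule that user overrides win are all my assumptions, not a finished design.

```python
# Base roles every program gets from the framework (hypothetical names).
BASE_THEME = {
    "background": "#ffffff",
    "button.unpressed": "#e0e0e0",
    "button.pressed": "#b0b0b0",
    "checkbox.check": "#000000",
}


def resolve_theme(client_roles: dict, user_overrides: dict) -> dict:
    """Merge framework defaults, client-declared roles, and user choices.

    Later sources win, so the user always has the final say.
    """
    theme = {**BASE_THEME, **client_roles}
    unknown = set(user_overrides) - set(theme)
    if unknown:
        raise KeyError(f"unknown color roles: {sorted(unknown)}")
    theme.update(user_overrides)
    return theme


# The client declares one extra role; the user picks saturated colors.
theme = resolve_theme(
    {"diff.added": "#ccffcc"},
    {"diff.added": "#00ff00", "button.pressed": "#ff00ff"},
)
print(theme["diff.added"], theme["button.pressed"], theme["background"])
```

Because clients must declare their roles up front, the user's settings screen can list every color a program uses.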

But how should the framework implement accessibility?

Well, I think it should take hints from AccessKit, which does this:

Because Chromium has a multi-process architecture and does not allow synchronous IPC from the browser process to the sandboxed renderer processes, the browser process cannot pull accessibility information from the renderer on demand. Instead, the renderer process pushes data to the browser process. The renderer process initially pushes a complete accessibility tree, then it pushes incremental updates. The browser process only needs to send a request to the renderer process when an assistive technology requests one of the actions mentioned above. In AccessKit, the platform adapter is like the Chromium browser process, and the UI toolkit is like the Chromium renderer process, except that both components run in the same process and communicate through normal function calls rather than IPC.

Thus, in AccessKit, the client and framework push data to the accessibility API.

I think that’s a good idea, so let’s do that.

But we’ll come back to that idea later.

Globalized

The framework should be built from the ground up to support globalization.

This means:

- Internationalization

- Localization

- Layouts

Internationalization

Internationalization is about having translations for every bit of text. This is the easy, if tedious, part.

But the framework should ensure that this is possible through pure data, not code. This includes times when messages include bits from the software itself; if those bits cannot be put in any spot in the message, that’s a fail.

Sure, passing those bits into the UI does need code, but the framework should take care of putting things into the right order.
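As a small illustration of "pure data, not code," here is a sketch using named placeholders. The `MESSAGES` table and `translate` function are invented for this example, and the "xx" entry is a made-up language that only exists to show a different word order.

```python
# Translations are plain data; each message decides where its values go.
MESSAGES = {
    "en": {"copied": "Copied {count} files to {folder}."},
    # Made-up word order for illustration: the folder comes first.
    "xx": {"copied": "{folder} <- {count}"},
}


def translate(lang: str, key: str, **values) -> str:
    """Look up a message and let the template place the named values."""
    return MESSAGES[lang][key].format(**values)


print(translate("en", "copied", count=3, folder="backup"))
print(translate("xx", "copied", count=3, folder="backup"))
```

The client passes the same two values either way; only the data decides the order. Plurals and grammatical gender need more than this, so treat it as the simplest possible case.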

Internationalization is also about being able to render any language. This is the hard part. But it must be done.

Finally, it should support input method editors.

Localization

Localization is about matching the conventions of the user’s language and country.

How do they render numbers? The framework should match that.

How do they render currency? The framework should match that.

How do they render dates? The framework should match that.

You get the idea.

This stuff isn’t hard either, but it is tedious. Nevertheless, it is crucial to making a framework that programmers will use.

Layouts

So for some reason, there are languages that don’t read left-to-right, top-to-bottom. Crazy, right?

Apparently, some programmers forgot about that. Let’s not make that same mistake.

The framework should be capable of the following layouts:

- Left-to-right, top-to-bottom (like most European languages).

- Right-to-left, top-to-bottom (like Hebrew).

- Top-to-bottom, left-to-right (like Mongolian).

- Top-to-bottom, right-to-left (like Japanese).

In fact, because we need to worry about desktop and mobile layouts, the framework needs to support the Cartesian product of the above layouts and desktop and mobile:

- Left-to-right, top-to-bottom on desktop.

- Right-to-left, top-to-bottom on desktop.

- Top-to-bottom, left-to-right on desktop.

- Top-to-bottom, right-to-left on desktop.

- Left-to-right, top-to-bottom on mobile landscape.

- Right-to-left, top-to-bottom on mobile landscape.

- Top-to-bottom, left-to-right on mobile landscape.

- Top-to-bottom, right-to-left on mobile landscape.

- Left-to-right, top-to-bottom on mobile portrait.

- Right-to-left, top-to-bottom on mobile portrait.

- Top-to-bottom, left-to-right on mobile portrait.

- Top-to-bottom, right-to-left on mobile portrait.

Hoo boy! That’s a lot of work!

But designing for them upfront will make it less work than it could have been.
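The list above really is just a Cartesian product, so a test suite for the layout engine could generate it instead of writing it out by hand. The constant names here are mine.

```python
from itertools import product

TEXT_FLOWS = [
    "left-to-right, top-to-bottom",
    "right-to-left, top-to-bottom",
    "top-to-bottom, left-to-right",
    "top-to-bottom, right-to-left",
]
SCREEN_MODES = ["desktop", "mobile landscape", "mobile portrait"]

# One entry per (screen mode, text flow) pair the layout engine must handle.
COMBINATIONS = list(product(SCREEN_MODES, TEXT_FLOWS))

for mode, flow in COMBINATIONS:
    print(f"{flow} on {mode}")
```

That gives 12 combinations, and adding a mode or a text flow later grows the list automatically.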

With Standard UI Affordances

Okay, the name of this requirement sucks, but what I mean is that the framework should support the graphical niceties that people are used to.

This includes:

- Multiple windows in the same program.

- Multiple windows across multiple monitors.

- DPI scaling.

- Color, including gamma correction, color balance, HDR, etc.

- Animations.

- Proper VSync.

- Double buffering.

- Any that I might have forgotten.

Bonus points if the UI can be split into sections like Blender’s Areas.

One sticky point is dialog boxes, but here too, I think Blender has the solution: instead of creating dedicated windows for dialogs, Blender just renders an overlay on top of the UI for a dialog box. The framework should support something like this to avoid platform-specific differences regarding dialog boxes, especially with focus.

With Standard UX Affordances

There are standard things that users expect GUIs to have, and they expect these things because of things like the Apple Human Interface Guidelines (AHIG), both old and new, as well as an incredibly old IBM guide called Common User Access (CUA).

At the request of the author of the article about CUA, I reconstructed the two parts of the guide:

CUA is probably slightly better than AHIG, so the framework should adopt as much of it as possible by default. Then it should follow AHIG where possible, and finally, if absolutely necessary, the framework can stray.

But really, those affordances are expected, so they should be met if at all possible.

In addition, the framework should make it easy for clients to use platform-specific shortcuts, such as using cmd on macOS vs ctrl on other platforms, as well as treating the mouse the way the platform wants.

This also goes for implementing widgets to behave how the platform-native ones usually do. Yes, that brings up the same portability problems, but with the widget under the control of the framework, those problems can be minimized.

For mobile, the framework should make it easy for clients to use presses and long presses, and bonus points if it allows clients to treat them the same as left and right mouse buttons.

The framework should handle sound, not just graphics.

The framework should help with copy+paste. It should also help with drag and drop.

And finally, the framework should have provisions for different input devices like joysticks, 3D mice, drawing tablets, eye trackers for accessibility, etc.

Robust, Reliable, and Predictable

If you’re here just for the titular new architecture, we have finally reached that point.

I apologize for the longer explanation, but I think more people will understand it if I talk about the design path I took.

I am building a VCS. People want a GUI for that VCS. I don’t have time to make a GUI, and I don’t want to depend on either Electron or Qt. So I decided to hack it and make either a TUI or a web UI (a local server and a local browser).

I’m afraid I’ve been thinking. A dangerous pastime, I know.

And it certainly wasn’t helped by a recent Hacker News post about interesting TUIs.

Sometimes HN threads can go in awesome directions, such as this one where a user suggests going to Lowes or Costco and watching their employees work on their internal TUIs and how fast they are on them.

People started talking about how user interfaces used to all be like that, and someone complained about mice.

It’s not Reddit, but it’s still HN.

And then electroly piped in with a Hacker News gem:

It’s part of it but not all of it. Today’s modern UIs won’t buffer keystrokes as you move between UI contexts. You often have to wait for a bit of UI to load/appear before you can continue, or else your premature keystroke will be eaten or apply to the wrong window. This kills your throughput because you have to look at the screen and wait to recognize something happening. Even if you have all the keystrokes memorized, the UI still makes you wait.

In a traditional TUI you can type at full speed and the system will process the keystrokes as it is able, with no keystrokes being lost. If a keystroke calls up a new screen, then the very next keystroke will apply to that screen. Competent users could be several screens ahead of the computer, because the system doesn’t make them wait for the UI to appear before accepting the keystrokes that will apply to that UI.

– electroly on Hacker News (emphasis added)

And I sat and thought about that comment for a good 20 minutes, and even more as I went about the rest of the day.

‘Yes, TUIs can do that,’ I thought, ‘but why can they do that?’

And then I realized the simple answer: TUIs buffer their outputs just like they buffer their inputs.

Of course, most people don’t realize this because the buffering is implicit, but it does exist.

The buffer is the stdout buffer!

TUIs work by setting the terminal to a special mode, and then just writing bytes to stdout to “render” the UI. Obviously, the terminal is actually interpreting those bytes (including terminal escape codes) and actually printing stuff to the screen, but the program only needs to write those bytes to stdout.

And since the program decides what is rendered (by what it prints to stdout), it “knows” the current UI state, even if it is not rendered yet! In fact, the “renderer” (terminal) doesn’t care about the UI state.

This is why inputs are not lost and why the result is always accurate.
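Here is a toy model of that type-ahead behavior. The screens and key bindings are invented, and a plain list stands in for the stdout buffer; the point is only that each keystroke is applied to the program's own state, never to whatever happens to be on screen.

```python
from collections import deque

# Hypothetical screens: each maps a key to the screen it opens.
SCREENS = {
    "main": {"o": "orders"},
    "orders": {"n": "new-order"},
    "new-order": {},
}


def run(keys: str) -> tuple[str, list[str]]:
    """Apply buffered keystrokes in order; rendering only lags behind."""
    pending = deque(keys)   # input buffer: nothing typed is dropped
    output = ["draw main"]  # output buffer, like bytes queued on stdout
    screen = "main"         # the program's own state is always current
    while pending:
        key = pending.popleft()
        target = SCREENS[screen].get(key)
        if target is not None:
            screen = target
            output.append(f"draw {screen}")
    return screen, output


# The user types "on" before the "orders" screen has ever been shown.
print(run("on"))
```

The second keystroke lands on the "orders" screen even though no terminal has drawn it yet, which is exactly what the Lowes and Costco employees rely on.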

“Okay, Gavin, but why is that so important? If outputs are not buffered, then things are slower, but that’s not a big deal.”

Au contraire! It’s a HUGE deal! People have died because outputs were not properly buffered in a UI, and the clue to that is in one of the replies to electroly:

Reminds me of https://en.wikipedia.org/wiki/Therac-25

Username checks out.

Therac-25 tl;dr

The Therac-25 was a radiation treatment machine (for cancer). Its predecessor had hardware interlocks, but those were removed on the Therac-25 for software interlocks.

The software was not perfect, and those interlocks could be bypassed by fast operators who got ahead of the machine. In essence, inputs were buffered, but outputs weren’t, and thus, the interlocks could be bypassed to give a fatal radiation dose.

Suffice it to say that three people died because outputs were not buffered.

So I sat thinking about TUIs and how they buffer their outputs, and I wondered how it could be done in a framework.

Then my mind turned to the other UI option for my VCS, a web UI, and I realized that web UIs have something in common with TUIs: the renderer does not care about the UI state! All the renderer, the browser, cares about is the HTML/CSS.

What is the common thread? Easy: in both cases, the client pushes data to the renderer, and the renderer just receives the data and renders it without comment.

Existing GUI frameworks are mostly opinionated; they build the UI from what the client tells them, and they store the state. In that situation, buffering outputs is impossible.

The solution is to move the state to the client and have the framework act as a dumb renderer. The client should push data to the renderer, and the renderer should render it, nothing more.

I have decided to call that data widget trees because it’s a set of widgets and how they relate to each other. And also their state.

But that’s not sufficient; it turns out that in TUIs and web UIs, the client will push completely new data for every new state, and that is an important detail: every time the renderer has to render something, the client needs to push a new widget tree.

That can be expensive, so it would be great if we could implement widget trees with copy-on-write. And then the renderer could just implement pointer/index comparison to see if a widget changed.

If a widget changed, its parent should change also, and children should be checked for every changed widget in the renderer to figure out the smallest “dirty” screen area(s).

With this copy-on-write feature, widget trees become versioned widget trees (VWT), and this is what clients should push to the renderer.
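Since none of this is implemented, here is only a minimal sketch of the idea. The `Widget` class, `with_text`, and `dirty` are names I made up, and a real renderer would track screen rectangles, not widget names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Widget:
    """An immutable node; a new version shares every untouched subtree."""
    name: str
    text: str = ""
    children: tuple["Widget", ...] = ()


def with_text(node: Widget, path: tuple[int, ...], text: str) -> Widget:
    """Copy-on-write update: rebuild only the nodes along `path`."""
    if not path:
        return Widget(node.name, text, node.children)
    kids = list(node.children)
    kids[path[0]] = with_text(kids[path[0]], path[1:], text)
    return Widget(node.name, node.text, tuple(kids))


def dirty(old: Widget, new: Widget, out: list[str]) -> list[str]:
    """Collect changed widgets, skipping shared subtrees by identity."""
    if old is new:
        return out  # same object: nothing below it changed
    same_self = (old.name, old.text) == (new.name, new.text)
    if not same_self or len(old.children) != len(new.children):
        out.append(new.name)  # this widget itself must be redrawn
        return out
    for a, b in zip(old.children, new.children):
        dirty(a, b, out)
    return out


v1 = Widget("window", children=(
    Widget("toolbar", children=(Widget("save", "Save"),)),
    Widget("status", "Ready"),
))
v2 = with_text(v1, (1,), "Saved")  # version 2 changes only the status bar

print(dirty(v1, v2, []))
print(v1.children[0] is v2.children[0])
```

Version 1 stays intact after the update, the toolbar subtree is shared between both versions, and the identity check lets the comparison skip it without looking inside.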

This means that clients, when dealing with events, can always use the latest version of the widget tree and apply the next event to that version, never losing or dropping events and never causing race conditions that may kill someone.

In addition, each widget tree, or just the changes, could be processed and then pushed to the accessibility code since that will use the same architecture!

The end result will be that all clients will be robust, reliable, and predictable, because this architecture is a pit of success, and accessibility will simply be a matter of data transformation.

And both accessibility processing and rendering could happen on separate threads from the client event loop, which will improve throughput because the client never has to give up control of the UI/event thread to the framework.

Latency is still a problem, however.

There is one more advantage of this VWT architecture, but to explain it, I need to talk about why the framework needs to be…

Flexible

I mean a few things when I say that the framework should be flexible.

First, clients should be able to define their own widgets. That kind of goes without saying. And those widgets should work on all platforms.

Second, the framework should support both retained mode and immediate mode.

Yes, I mean it because both have their advantages.

Immediate mode is good for flexibility, and since that’s the name of this requirement, it’s necessary.

However, immediate mode is also CPU- and GPU-heavy; it is constantly running. When immediate mode is required, it should be used as little as possible, for a single widget if possible.

“But Gavin! That gets rid of the biggest advantage of immediate mode: that there is no separation of state between the client and the framework!”

Ah, but you are wrong! We still have that advantage!

Remember the architecture we designed above; in this architecture, where the client builds a versioned widget tree and passes that to the framework, the client is the only one with the master state.

The framework just takes the latest version of the widget tree, which is the latest state, as input and acts as a purely functional transform on that state. It doesn’t store it, it doesn’t manipulate it, and it doesn’t use it to update its own state because it owns no state.

Instead, it takes the client’s state, the versioned widget tree, and turns it into pixels and speaker flux.

Sure, the framework may provide the data structures for storing state and the code for manipulating it, like for animations, but the place where the state is stored and manipulated is in the client!

With that new architecture, the only advantage of immediate mode is flexibility, so it should be contained to individual widgets, including group widgets, with their own rendering and stuff.

Oh, yeah, this is basically custom widgets, which we already have. But to make it work for immediate mode, we need to make sure these custom widgets can have animations and set how quickly they update themselves.

Some widgets may need an update only once every half second, like a cursor, and some may need 120+ FPS for constant updates. Or anything in between.

“But that architecture just means the framework is immediate mode since the state is in the client!”

Not quite.

Because while the client has the state, that doesn’t mean the client should have to worry about managing the state; if the framework is done well, it will properly encapsulate the management of that state in its own code that the client can call for that purpose, and the client can remain oblivious to everything, including the actual data structures that make up the state.

This is one of the advantages of retained mode: the client can remain oblivious. We want to keep that advantage.

Yes, it means that the framework’s state management API needs to be top-notch, but it’s possible, and good API designers exist.

This is why the VWTs are versioned and copy-on-write; once a version is built, it is frozen, and the client can use the old one as the canonical current state. It also can immediately move to making a new version while the framework processes the old version for rendering and sound.

Another advantage of retained mode is that clients can predefine layouts in data, which is usable by non-technical designers, rather than Turing-complete code, which can be unusable by those designers. This still works in this framework because the framework can provide a function that the client can call to load a predefined layout. Easy.
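As a sketch of that "function the client can call," here is a loader for a layout stored as JSON. The schema, the widget type names, and `load_layout` are all invented for illustration; a real framework would return its own tree type, not a dict.

```python
import json

# A layout a designer could edit without touching code (made-up schema).
LAYOUT_JSON = """
{
  "type": "column",
  "children": [
    {"type": "label", "text": "Commit message"},
    {"type": "textbox", "id": "message"},
    {"type": "button", "id": "commit", "text": "Commit"}
  ]
}
"""

KNOWN_TYPES = {"column", "label", "textbox", "button"}


def load_layout(text: str) -> dict:
    """Parse a layout and reject widget types the framework does not know."""
    def check(node: dict) -> dict:
        if node["type"] not in KNOWN_TYPES:
            raise ValueError(f"unknown widget type: {node['type']}")
        for child in node.get("children", []):
            check(child)
        return node
    return check(json.loads(text))


tree = load_layout(LAYOUT_JSON)
print([child["type"] for child in tree["children"]])
```

The client still owns the result, so the loaded layout is just the first version of its widget tree.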

And the final advantage of retained mode is minimal updates. In immediate mode, every frame is drawn, but retained mode can wait for events to redraw, and it can redraw only the part(s) of the screen that changed.

This is another reason why the VWTs are versioned, as well as why there is a buffer of them, and these VWTs are how we ensure the framework uses as little power as possible.

Programmable

This one may seem stupid or out of place. Why does it exist? Why prioritize it over testability of all things?

The answer: programmability is what enables the rest of the requirements, so it’s a higher priority.

And I’m only talking about it first because the requirements are in priority order.

So what is programmability? It is the ability of the end user, not the programmer, to affect the entire program by code.

“But Gavin, didn’t you just say that code can be unusable for non-technical people?”

Yes, I did. And it is true.

But there is some code that even non-technical people understand: commands.

Imagine a program where every GUI item could be manipulated by commands.

Want to press a button? press <button_id>

Want to change a slider? set_slider <slider_id> <value>

Want to set a radio button? set_radio <radio_id> <option_id>

Want to toggle a checkbox? toggle <checkbox_id>

Obviously, the syntax could be different; the point is that the commands could exist.
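To make that concrete, here is a toy command console in Python. Everything in it is hypothetical: the `Console` class, the widget ids, and the choice to have `set_slider` take a value as its second argument. It only shows that a line of text can be mapped onto a change in widget state.

```python
import shlex


class Console:
    """Toy command console; every name here is hypothetical."""

    def __init__(self):
        self.commands = {}
        self.widgets = {"ok": 0, "volume": 0, "dark_mode": False}

    def command(self, name):
        """Decorator that registers a handler under a command name."""
        def register(handler):
            self.commands[name] = handler
            return handler
        return register

    def run(self, line):
        """Parse one line and call the matching handler."""
        words = shlex.split(line)
        if not words or words[0] not in self.commands:
            raise ValueError(f"unknown command: {line!r}")
        return self.commands[words[0]](self.widgets, *words[1:])


console = Console()


@console.command("press")
def press(widgets, button_id):
    widgets[button_id] += 1  # count presses instead of firing a real event


@console.command("set_slider")
def set_slider(widgets, slider_id, value):
    widgets[slider_id] = int(value)


@console.command("toggle")
def toggle(widgets, checkbox_id):
    widgets[checkbox_id] = not widgets[checkbox_id]
```

Running `console.run("set_slider volume 40")` sets the hypothetical `volume` slider to 40, the same way dragging it would.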

Of course, the question is: how would the user enter those commands?

The answer is a REPL in a “command console.”

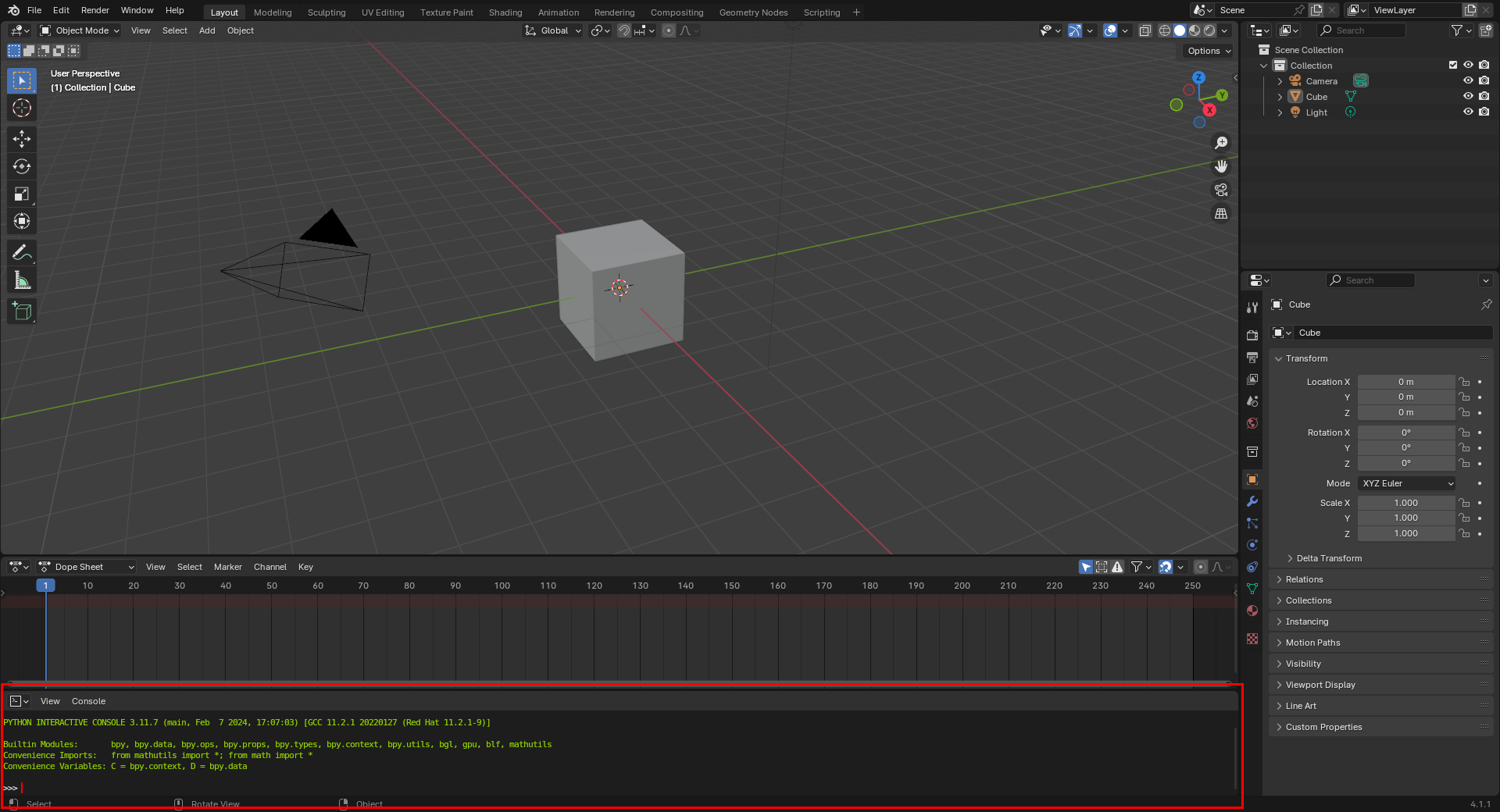

Something like this already exists in Blender; it’s called the Python Console, and it looks like this:

Blender is not a typical GUI program; any item in its interface can be manipulated in Python. This is possible because the Blender developers expose a Python API for its interface.

This means that you can type Python code in the Python Console, and Blender will act as though events were generated for the entities affected by that code.

The framework should do the same thing, although it should generate the API automatically during build. I don’t know how you would do that in typical programming languages, but in my language, adding commands, even in a hierarchy, is easy and can be done at comptime or runtime.

And then if you use a general-purpose programming language, you essentially get a plugin system for free.

But regardless, the framework should make it possible to manipulate all input devices through commands, and those commands should be optimized for speed and ease.

Take the mouse for example. Say a user wants to manipulate the mouse through commands. They might have a few options.

First, if they know the exact coordinates, then there should be a command for that.

But if they don’t know the exact coordinates, perhaps there could be commands to do a binary search-like walk to the correct coordinates, i.e., a walk in both directions.

And for extra ease, maybe the framework could draw vertical and horizontal lines centered at the mouse while those walks are in progress, allowing a walk on one axis, then another.

And maybe there would be a set of commands to move the mouse by one pixel in either direction on each axis.
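A sketch of that walk on a single axis, assuming the pointer starts in the middle and each command halves the remaining range. `AxisWalk`, `lower`, `higher`, and `nudge` are names I made up; the actual commands could be anything.

```python
class AxisWalk:
    """Binary-search-like walk along one screen axis (hypothetical design)."""

    def __init__(self, size):
        self.size = size
        self.lo, self.hi = 0, size - 1
        self.pos = (self.lo + self.hi) // 2

    def _recenter(self):
        self.pos = (self.lo + self.hi) // 2
        return self.pos

    def lower(self):
        """The target is below the pointer: discard the upper half."""
        self.hi = max(self.lo, self.pos - 1)
        return self._recenter()

    def higher(self):
        """The target is above the pointer: discard the lower half."""
        self.lo = min(self.hi, self.pos + 1)
        return self._recenter()

    def nudge(self, delta):
        """Move by single pixels, clamped to the axis."""
        self.pos = min(self.size - 1, max(0, self.pos + delta))
        return self.pos
```

On a 1920-pixel-wide screen, the range halves with every command, so any column is reachable in roughly eleven steps, and the nudge command covers the last few pixels.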

“That sounds exhausting, Gavin! Too much typing!”

Ah, but what if you can’t type or use the mouse?

Emily Shea is a programmer, and she uses her voice to interact with computers. She has incredible demonstrations of her tools, which include single-syllable words for common operations.

What if she added “gee” and “haw” for doing the binary search walk on the X axis, and then “ree” and “waw” for the pixel-by-pixel movement?

She might say something like this: “Gee, gee, haw, gee, haw, haw, ree, ree”, and she would land on an exact X pixel. Repeat for the Y axis.

Then suddenly, at least for programs written in the framework, she could do anything, including mouse-based programs like digital painting programs.

Yes, maybe that’s still a lot of work, but it’s better than the status quo, and with full programmability, it would be simple for users to add new techniques to all programs in the framework without filing bug reports against each and every program!

On top of that, a text-based interface is useful for the blind:

“Most terminal user interfaces can be used by blind users pretty easily.”

– “Why the Text Terminal Cursor Is Important for Accessibility”

It makes sense: when your interface is purely words by sound, having all of the interface available through words by text, which can be translated into sound, makes things easier.

So programmability is actually an accessibility feature!

As long as programs follow best practices for accessible CLIs.

But programmability doesn’t just enable accessibility, it also makes programs…

Discoverable

One of the problems with GUIs is that it’s hard for users to figure out what they can do.

This is especially true for beginners.

Well, if the framework includes a standard console, a couple of simple features could aid discoverability:

- Tab completion.

- Command help.

Tab Completion

Text-based programs often offer a feature called “tab completion.”

Perhaps you don’t remember the exact command you want, but you would recognize it if you saw it. You hit <TAB>, and a list of available commands is printed.

You see the one you want, and you use it.

This feature even works on partially completed commands. Type an a<TAB>, and only commands that begin with a are shown.

Smart programmers will put commands in a hierarchy, and then users can go to the console, type <TAB>, and see all of the command categories.

Then they can explore further, diving into each category that interests them. The hierarchy guides their exploration, allowing them to discover things that might interest them.
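Tab completion over a command hierarchy can be sketched with nested dictionaries. The `COMMANDS` tree and the `complete` function are invented for this example, and I am assuming categories and commands are separated by spaces.

```python
# Hypothetical command hierarchy: categories map to their subcommands.
COMMANDS = {
    "audio": {"mute": None, "volume": None},
    "file": {"open": None, "save": None},
    "about": None,
}


def complete(tree, text):
    """Return the candidates for the last (possibly empty) word of text."""
    *path, prefix = text.split(" ")
    node = tree
    for word in path:
        node = node.get(word) if isinstance(node, dict) else None
        if not isinstance(node, dict):
            return []  # not a category, so there is nothing to explore
    return sorted(name for name in node if name.startswith(prefix))
```

An empty line completes to every top-level category, `a` narrows that to `about` and `audio`, and `file ` (with a trailing space) lists what is inside the `file` category.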

Command Help

But how do they know exactly what those commands do?

Easy: the console can implement a way to show how to use each command and what UI element those commands are tied to.

And with that, features are easily discoverable. In fact, you can easily discover all features by a full search.

Programs don’t have to remain a mystery.
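For command help, one possible approach in Python is to build the help text from the handler itself, so it cannot drift out of date. This sketch assumes each command is a function with a docstring and is registered along with the id of the UI element it drives; `describe`, `set_volume`, and `volume_slider` are all hypothetical.

```python
import inspect


def describe(name, handler, widget_id):
    """Build help text from a handler's signature and docstring."""
    params = " ".join(f"<{p}>" for p in inspect.signature(handler).parameters)
    usage = f"{name} {params}".rstrip()
    doc = inspect.getdoc(handler) or "(no description)"
    return f"{usage}\n  {doc}\n  widget: {widget_id}"


def set_volume(level):
    """Set the output volume from 0 to 100."""
```

Calling `describe("audio volume", set_volume, "volume_slider")` yields the usage line, the description, and the widget the command is tied to.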

Testable

Programmability enables the final requirement of testability.

I love tests. I love large test suites. But I only love them if they are fully automated.

And that is why I don’t work on GUI software; automatic testing of GUI software is fragmented, rudimentary, and often not even an option.

Wouldn’t it be nice if the framework could easily automate tests?

Well, we already have a way of generating events programmatically; we could use that to implement automatic tests by just having the program run some code in the console.

Cool! Now we have automatic tests! We could even program the framework such that it could accept that code from stdin (only in a special test mode), and such tests could be run from shell scripts or other test programs.
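A sketch of that test mode, assuming the console exposes some function that runs a single command line (called `dispatch` here; both names are mine). The script runner reads any text stream, so in test mode the framework could hand it `sys.stdin`.

```python
import io


def run_script(stream, dispatch):
    """Feed each command line in stream to dispatch; return how many ran."""
    count = 0
    for number, line in enumerate(stream, start=1):
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        try:
            dispatch(line)
        except Exception as error:
            raise RuntimeError(f"line {number}: {line!r} failed: {error}") from error
        count += 1
    return count


# In a hypothetical test mode this would be run_script(sys.stdin, console.run);
# here a string stands in for stdin and a list records what was dispatched.
seen = []
ran = run_script(io.StringIO("press ok\n\n# a comment\ntoggle dark_mode\n"), seen.append)
```

Reporting the failing line number matters here, since a shell script driving the test needs to know which step broke.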

However, creating code for every test sounds like a lot of manual work; can we automate the making of the tests too?

Well, what if we added a way for the framework to record all events in an execution? Then we could just replay those events as code.

Then, to make a test, all a user would have to do is record an execution. Legendary users could even do that on bug reports, and developers would have instant reproducers!

Nice!
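Recording and replaying could be as simple as wrapping the command dispatcher. This is a sketch with a toy app standing in for a real program; `Recorder` and `make_app` are hypothetical, and it assumes every event can be expressed as a command line.

```python
class Recorder:
    """Wrap a dispatch function and remember every command it receives."""

    def __init__(self, dispatch):
        self.dispatch = dispatch
        self.log = []

    def __call__(self, line):
        self.log.append(line)
        return self.dispatch(line)

    def script(self):
        """Return the recording as replayable text, one command per line."""
        return "".join(line + "\n" for line in self.log)


def make_app():
    """Toy app: a click counter and a dark-mode checkbox."""
    state = {"clicks": 0, "dark": False}

    def dispatch(line):
        if line == "press counter":
            state["clicks"] += 1
        elif line == "toggle dark":
            state["dark"] = not state["dark"]
        else:
            raise ValueError(f"unknown command: {line!r}")

    return state, dispatch


# Record a session on one instance...
recorded_state, dispatch = make_app()
recorder = Recorder(dispatch)
for line in ["press counter", "toggle dark", "press counter"]:
    recorder(line)

# ...then replay the script against a fresh instance.
replayed_state, replay = make_app()
for line in recorder.script().splitlines():
    replay(line)
```

After the replay, the fresh instance ends up in the same state as the recorded one, which is exactly what a reproducer needs.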

But what would the tests actually check for?

Easy: they could check the results of the execution (such as changes to a file), and they could check all of the versioned widget trees generated through the execution. Those VWTs could also be recorded (once a bug has been fixed), and so recordings could not only generate the events, but test oracles too.

This includes sound generation, by the way; why not embed sounds to play in the actual VWT since it should include all state? In fact, if VWTs are properly designed, they should include all info about all outputs, so all of that could be used in tests.
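Using recorded VWTs as test oracles might look like the sketch below. I am assuming each tree version can be represented as plain nested data (dictionaries and lists), including a `sound` field for audio output; the field names and both functions are made up for illustration.

```python
import json


def snapshot(tree):
    """Serialize one tree version to a stable, comparable string."""
    return json.dumps(tree, sort_keys=True, separators=(",", ":"))


def first_mismatch(produced_trees, golden_snapshots):
    """Return the index of the first differing version, or None if all match."""
    produced = [snapshot(tree) for tree in produced_trees]
    for index, (got, want) in enumerate(zip(produced, golden_snapshots)):
        if got != want:
            return index
    if len(produced) != len(golden_snapshots):
        return min(len(produced), len(golden_snapshots))
    return None


# Hypothetical versions from a known-good run: a click updates the label
# and queues a sound.
good_run = [
    {"id": "root", "sound": None, "children": [{"id": "count", "text": "0"}]},
    {"id": "root", "sound": "click.wav", "children": [{"id": "count", "text": "1"}]},
]
golden = [snapshot(tree) for tree in good_run]
```

A later run that forgets to queue the sound would fail at version 1, without a single pixel being rendered or a single sample being played.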

And since rendering is separated from the actual VWTs, tests could be run headless.

Though if clients need to test the actual rendering of custom widgets, headless isn’t an option.

Regardless, it’s time for GUIs to be just as testable as other forms of software.

Conclusion

Beyond details inherent to GUIs, this post described a new design and architecture for a GUI framework.

I believe that this architecture combines the advantages of immediate mode (flexibility and a single source of truth) with the advantages of retained mode (encapsulated scenes, predefined UIs, and minimal updates). And I believe its programmability lends itself to serving the needs of GUI developers and all users, whether beginner or expert, keyboard warrior or mouse lover, impaired or otherwise.

Am I right? Well, we can only tell if a framework with this architecture is used to build nontrivial software. This means that someone with good design chops, gobs of time, and Goku motivation must actually implement a framework with this architecture, and others must actually implement software on top of it.

I may or may not have the design chops, but I definitely don’t have the time or motivation.

Go forth and do.

Or maybe pay me to create it. Money would be a great motivator!

Or just let this idea rot. I won’t blame you if you do.